GPT-4, the latest AI upgrade, has just been released.

Is the world ready to bow down to ChatGPT’s all-powerful text-generation abilities?

In most cases, text generated by ChatGPT can be detected by those familiar with the language patterns and quirks commonly associated with AI-generated content. These might include long-winded sentences, generic word choice or phrasing, occasional lack of coherence, or inconsistencies within the text.

While advanced models like GPT-4 are designed to mimic humans better than previous iterations, they may still have identifiable characteristics that set their output apart from human writing. Additionally, there are tools and algorithms designed specifically to detect AI-generated text.

Let’s have a closer look at these methods below.

Visual Signs

For now, you can still rely on your trusty sense of sight to detect potential AI-generated text that may be ‘invisible’ to untrained eyes. Below are the visual indicators to look for when reading articles, documents, or essays to determine whether they are produced via AI or not.

You can also try this game created by Daphne Ippolito, a senior research scientist at Google, to sharpen your skill at spotting AI-generated content.

Long Sentences and Repeated Words and Ideas

Long-winded sentences serve as a telltale sign of a ChatGPT-generated text.

This AI chatbot tends to produce excessive words, especially when given a broad question without any specific instructions (prompts).

Sometimes the only way to stop its seemingly never-ending stream of text is to click the ‘Stop Generating’ button at the bottom of the screen.

Furthermore, ChatGPT’s love for lengthy sentences leads to overused words, such as ‘the,’ ‘that,’ ‘these,’ ‘those,’ and ‘it.’ A human writer would typically make an effort to minimize such repetition to improve readability.

In addition, ChatGPT often clutters its output with sentences that repeat the same idea, leaving users with text that is full of fluff and offers little value.

These lengthy sentences may appear ‘informative’ or ‘well-written’ to most readers. But individuals who deal with text on a regular basis, such as educators and writers, would immediately see these signs as red flags waving in their faces.
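The signals above can be turned into rough numbers. The Python sketch below is a minimal illustration, not a detector: it measures average sentence length and the share of the function words listed above. The function name `style_stats`, the crude sentence splitter, and the word list are assumptions made for this example, and no threshold on these numbers reliably separates human text from AI text.

```python
import re

# Function words named in the article as commonly overused (illustrative list).
FUNCTION_WORDS = {"the", "that", "these", "those", "it"}

def style_stats(text):
    """Return (average words per sentence, share of listed function words)."""
    # Crude sentence split on ., ! and ?; good enough for a rough estimate.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[a-z']+", text.lower())
    if not sentences or not words:
        return 0.0, 0.0
    avg_len = len(words) / len(sentences)
    share = sum(w in FUNCTION_WORDS for w in words) / len(words)
    return avg_len, share

if __name__ == "__main__":
    avg_len, share = style_stats("The cat sat. It ran!")
    print(f"average sentence length: {avg_len:.1f} words")
    print(f"function-word share: {share:.0%}")
```

Numbers like these are best used to compare a suspicious passage against writing you know is human, such as the same author's earlier work, rather than as a verdict on their own.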

Full of Facts But Low on Opinions and Emotions

Having used ChatGPT for months, both for work and play, I can say that one of the most unmistakable signs of AI-generated content is its ‘lack of humanity.’

If given a question with no instructions about tone or style, ChatGPT will usually generate fact-heavy text without personal insights or emotions. In short, the outputs are devoid of the human experience that allows writers to connect with readers.

While such content may be acceptable to the general public, as a writer who reads and produces various articles every day, I can immediately detect a soulless text when I encounter one.

Furthermore, opinions and emotions are what make content relatable and human, and the AI chatbot does not have them, at least for now.

High-Tech Methods

You can now use free and paid AI detection tools to identify AI-generated text. These tools typically detect text generated by Generative Pre-trained Transformer (GPT) models such as GPT-3, GPT-2, GPT-J, and GPT-Neo.

You may also check our comprehensive guide about the best AI detectors in the market today.

AI Detection Tools

Various AI detection tools are now available to detect sentences and paragraphs generated by ChatGPT. However, it is crucial to remember that these tools remain imperfect and not the ultimate solution you may be searching for.

One significant weakness is that they can get it wrong, often misidentifying legitimate human-written content as “AI” and vice versa. Therefore, it is essential to use these tools cautiously and to verify the results with human judgment.

“It’s like trying to chase a moving target. Every time you come up with something to detect the model, there is an even better model. I don’t think this race is the best way to solve this.”

Muhammad Abdul-Mageed, Canada Research Chair in Natural Language Processing and Machine Learning

In addition, individuals seeking to circumvent these detection tools can use paraphrasing platforms like Quillbot to make their AI-generated content sound more human, and this often works.

Furthermore, in the near future, the red flags that these detection tools currently hunt for may disappear entirely. Future AI models will likely become more sophisticated, enabling them to write more fluid outputs and even break grammar rules, much like humans do.

In addition, the companies that develop these scanning tools, including OpenAI, are transparent in acknowledging that these virtual instruments are imperfect and should not be the sole determinant of AI-written content.

But of course, these things shouldn’t be a reason to get discouraged from using these tools.

Check out the top AI text detectors. For a more extensive selection of ChatGPT detectors, you can visit this directory and explore other tools that match your scanning needs.

Watermarks

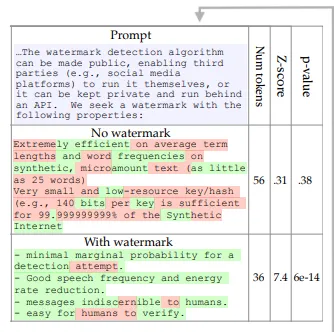

Researchers are currently exploring the possibility of using ‘watermarks’ to identify sentences generated by ChatGPT and other similar AI language tools.

The first type of watermark is essentially a set of guidelines for arranging word patterns. When a text scanner detects that these guidelines have been applied to a text, it would assume that it came from an AI tool powered by a large language model (LLM).

Moreover, AI’s fixation on following rules, such as adhering to grammar rules and writing sentences by the book, is a dead giveaway of its involvement in text creation. In contrast, humans tend to break these rules more often due to their individual writing styles, personal flair, and unique perspectives.
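To make the word-pattern idea concrete, here is a toy Python sketch in the spirit of the published ‘green list’ watermarking proposals. It is an illustration under stated assumptions, not the scheme any vendor uses: the names `is_green`, `pick_word`, and `green_fraction`, the SHA-256 rule, and the 50/50 split are all invented for this example. A generator that follows the rule prefers ‘green’ words, and a scanner counts how often the rule was obeyed.

```python
import hashlib

def is_green(prev_word, word):
    """Hypothetical rule: hash the word pair; about half of all pairs are 'green'."""
    digest = hashlib.sha256(f"{prev_word}|{word}".encode("utf-8")).digest()
    return digest[0] % 2 == 0

def pick_word(prev_word, candidates):
    """Generator side: prefer the first green candidate, else fall back to the first."""
    for word in candidates:
        if is_green(prev_word, word):
            return word
    return candidates[0]

def green_fraction(text):
    """Scanner side: share of consecutive word pairs that satisfy the green rule."""
    words = text.lower().split()
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0
    return sum(is_green(a, b) for a, b in pairs) / len(pairs)

if __name__ == "__main__":
    sample = "humans tend to break these rules more often"
    print(f"green fraction: {green_fraction(sample):.2f}")
```

Over a long passage, ordinary writing should land near 0.5 under this rule, while text produced by a generator that consistently used `pick_word` would score noticeably higher. Short passages give noisy results, and heavy paraphrasing can wash the pattern out.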

Another type of watermark researchers propose is a virtual stamp that would be marked on articles, documents, and other types of text.

The idea is that when AI language generators ‘scrape’ content from these sources, these marks would appear when their outputs are run through text scanners.

This idea draws inspiration from the concept of marked money, where bills are dusted with fluorescent powder (visible only under UV light) to catch criminals.

Currently, these types of watermarks remain largely a concept, and researchers are somewhat skeptical about their long-term viability, given the rapid advancements in LLMs.

Could We Still Keep Up With AI Content?

The harsh reality we must prepare for is that future language models could soon obliterate every single clue we are currently using to detect AI-generated content.

OpenAI’s GPT-4, which powers ChatGPT, has already caused significant disruption, and even the latest AI detectors are struggling to keep up with its sophistication.

In fact, various platforms have already integrated the AI chatbot into their systems, including Microsoft’s Bing and Snapchat, which dramatically expanded its influence.

In addition, Google’s Bard and Meta’s LLaMA are also out in the open, designed to compete with ChatGPT and flood us with more text-generation services.

This trend is likely to continue and surge, with more global companies and startups joining the race to create the most sophisticated language models.

Competition is Both Our Doom and Hope

Competition, as they say, keeps the market alive and benefits consumers. But the race among LLM developers may prove to be a massive obstacle in our attempt to catch machine-generated content, as the models get more sophisticated at every turn.

But on the bright side, the competition itself might also offer us the chance to keep up with AI’s continuous evolution.

How? If demand for AI detection tools rises significantly in the future, this could open a new race to develop advanced scanning tools that can actually keep up with the latest LLMs.

This trend may or may not materialize, but it could be our best shot at keeping up with the ever-evolving language models.
